This ensures that the ImportError table is cleaned every day. To build the image, it is enough to place the Dockerfile and any files it refers to (such as DAG files) in a separate directory and run `docker build . --pull --tag my-image`.

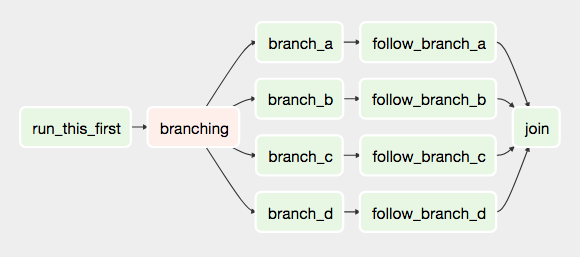

You can build your image without any need for Airflow sources. In the production image, the DAGs are in the /opt/airflow/dags folder.

A maintenance workflow that you can deploy into Airflow periodically deletes DAG files and cleans out entries in the ImportError table for DAGs that Airflow cannot parse or import properly.

The first illustrates the high-level Airflow DAG containing two nodes. For example, a single Airflow DAG can be reused with different Hamilton modules to create different models. Similarly, the same Hamilton data transformations can be reused across different Airflow DAGs to power dashboards, APIs, applications, etc.

A maintenance workflow that you can deploy into Airflow periodically cleans out the task logs so that they do not grow too large.

A maintenance workflow that you can deploy into Airflow periodically kills off tasks that are running in the background but do not correspond to a running task in the DB. This is useful because when you kill off a DAG Run or Task through the Airflow Web Server, the task still runs in the background on one of the executors until it completes.

A maintenance workflow that you can deploy into Airflow periodically cleans out the DagRun, TaskInstance, Log, XCom, Job DB and SlaMiss entries to avoid having too much data in your Airflow MetaStore.

A maintenance workflow that you can deploy into Airflow periodically cleans out entries in the DAG table for which there is no longer a corresponding Python file. This ensures that the DAG table doesn't have needless items in it and that the Airflow Web Server displays only the DAGs that are available.

Notice that you should put this file, the DAG from which you will derive others by adding the inputs, outside of the dags/ folder. It was tested with Airflow v2.3.2 (at latest). We use Airflow's internal behavior (which passes the DAG ID in args) to optimize our loading time.
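As an illustration of the log-cleanup workflow mentioned above, here is a minimal sketch of the core logic in plain Python. It is not the code of any particular maintenance DAG: the function names, the `*.log` file pattern, and the age threshold are assumptions made for the example, and in practice the function would be called from a scheduled task pointed at your own log directory.

```python
import time
from pathlib import Path

SECONDS_PER_DAY = 86400


def find_stale_logs(log_dir, max_age_days, now=None):
    """Return *.log files under log_dir last modified more than max_age_days ago."""
    now = time.time() if now is None else now
    cutoff = now - max_age_days * SECONDS_PER_DAY
    return sorted(
        path
        for path in Path(log_dir).rglob("*.log")  # assumed file pattern
        if path.is_file() and path.stat().st_mtime < cutoff
    )


def clean_logs(log_dir, max_age_days, dry_run=True, now=None):
    """Delete stale log files. With dry_run=True, only report what would be deleted."""
    stale = find_stale_logs(log_dir, max_age_days, now=now)
    if not dry_run:
        for path in stale:
            path.unlink()
    return stale
```

Keeping `dry_run=True` as the default makes it possible to check which files would be removed before deleting anything.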
The first step is to create the template file. Maybe one of the most common ways of using this method is with JSON inputs/files.

Photo by Veri Ivanova on Unsplash

A maintenance workflow that you can deploy into Airflow periodically takes backups of various Airflow configurations and files.

All of these belong to a series of DAGs/workflows that help maintain the operation of Airflow.
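The template-plus-JSON approach described above could be sketched roughly as follows. This is only one possible shape, not the original author's script: the placeholder names, the JSON keys (`dag_id`, `schedule`, `message`), and the function names are all assumptions made for the example. The template is kept as plain text, so the generator itself does not need Airflow installed. The template text uses `schedule_interval`, which matches Airflow 2.3.x.

```python
import json
from pathlib import Path

# Hypothetical template; in practice this text would live in a file kept
# outside the dags/ folder so that Airflow does not try to parse it.
TEMPLATE = '''\
from datetime import datetime

from airflow import DAG
from airflow.operators.bash import BashOperator

with DAG(
    dag_id="DAG_ID_PLACEHOLDER",
    schedule_interval="SCHEDULE_PLACEHOLDER",
    start_date=datetime(2023, 1, 1),
    catchup=False,
) as dag:
    BashOperator(task_id="say_hello", bash_command="echo MESSAGE_PLACEHOLDER")
'''


def render_dag(template, config):
    """Replace each KEY_PLACEHOLDER token with the matching value from config."""
    rendered = template
    for key, value in config.items():
        rendered = rendered.replace(f"{key.upper()}_PLACEHOLDER", str(value))
    return rendered


def generate_dags(template, config_dir, output_dir):
    """Write one DAG file per JSON config found in config_dir."""
    output_dir = Path(output_dir)
    output_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for config_path in sorted(Path(config_dir).glob("*.json")):
        config = json.loads(config_path.read_text())
        target = output_dir / f"{config['dag_id']}.py"
        target.write_text(render_dag(template, config))
        written.append(target)
    return written
```

A call such as `generate_dags(TEMPLATE, "configs", "dags")` would then write one file per JSON input into the dags/ folder, where the directory names are again placeholders for your own layout.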
